Recommended videos

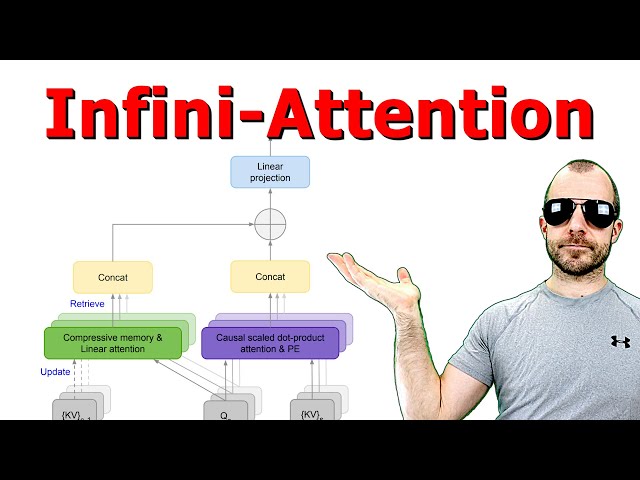

Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention

44,398 views

10 days ago

RING Attention explained: 1 Mio Context Length

code_your_own_AI

32.4K subscribers

Tue, 16 Apr 2024 00:00:00 GMT
