Recommended videos

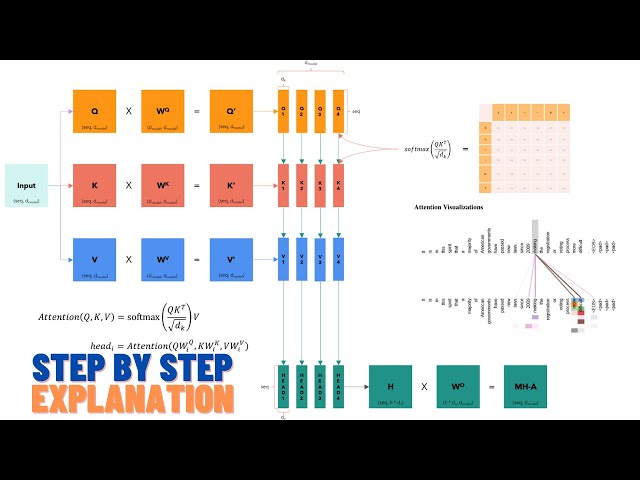

MIT 6.S091: Introduction to Deep Reinforcement Learning (Deep RL)

285,161 views

5 years ago

Reinforcement Learning (RL) explained (LLM, Vision, Robot)

code_your_own_AI

32.4K subscribers

12 Aug 2023

9 Comments